eFPGA: Taking the Cost, Size, and Power Consumption out of FPGA Without Sacrificing Speed or Programmability - Blog

June 02, 2023: Over the years, FPGAs have made their way into a wide variety of applications – starting in low-volume applications and moving into high-growth areas such as cloud data centers and communications systems. Programmability is key in many situations where algorithms, standards, and customer needs are rapidly changing. However, this advantage comes at a cost: FPGAs are power-hungry and take up valuable space.

The Evolution of AI Inferencing - Story

January 19, 2021: The AI inference market has changed dramatically in the last three or four years. Previously, edge AI didn’t even exist, and most inferencing took place in data centers, on supercomputers, or in government applications that were also generally large-scale computing projects.

The Four Stages of Inference Benchmarking - Story

February 17, 2020: This blog discusses how to benchmark inference accelerators to find the one that is best for your neural network.

Evolution of Embedded FPGA From Aerospace, Networking and Communications to Artificial Intelligence, and More - Blog

June 27, 2019: This article reviews the various generations of eFPGA, ending with the features available today.

Taking the Top off TOPS in Inferencing Engines - Story

January 30, 2019: With the explosive growth of AI, there has been an intense focus on new specialized inferencing engines that can deliver the performance that AI demands.

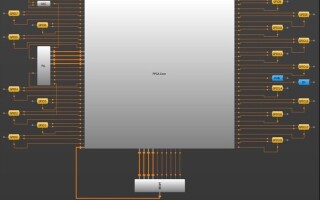

Using embedded FPGA as a reconfigurable accelerator - Eletter Product

December 19, 2017: Embedded FPGA is a fairly new technology, but it is quickly finding a home in a range of applications. One use growing in popularity? Connecting it to a processor bus as a reconfigurable accelerator.