The Appeal of Digital Twins Extends to Many Domains

September 20, 2023


NASA pioneered the digital twin concept, applying it from its early space programs through to the Mars Perseverance rover. For NASA’s purposes, a twin on Earth can very accurately mimic a live remote craft.

The digital twin uses sensor readings and other data from that live vehicle to try experiments that would be difficult or dangerous to attempt on the live craft. The principle has since extended to Earth-bound applications, where digital twinning provides a way to test new design options at low cost and low risk while still delivering high performance and fidelity.

Digital twins for autonomous cars are one frequently promoted example, but the applications are much more varied. Manufacturing assembly lines, networks, and data centers are all areas where digital twinning is active today. In electronic system design in particular, it is becoming clear that the design and verification of a system component can benefit greatly from digital twin modeling, which helps ensure high confidence in the final product.

An Example Application – Modeling a DPU Design

Data center architectures are evolving rapidly, with giant farms of GPUs, TPUs, and other specialized accelerators being used for AI training. Training tasks for large language models such as those behind ChatGPT are now so complex that they must be distributed both within clusters of accelerators and across the data center. This scale calls for new levels of network and storage bandwidth and latency, which has led to a new class of infrastructure hardware known as data processing units (DPUs). DPUs sit next to each compute and storage node, providing richer offload services and distributed resource sharing.

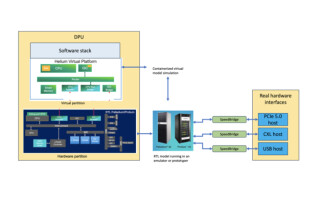

Figure 1. The DPU role in the datacenter

At first glance, the verification task for a new DPU design looks similar to verifying any other SoC, but there are wrinkles. PCIe interfaces will probably be PCIe 5.0 compatible, as will coherent CXL interfaces and NVMe interfaces to SSDs. Verification IP (VIP) for these relatively new standards is well developed but not perfect, in part because the hardware reference implementations are not perfect either. All chips come with errata that are difficult or impossible to capture accurately in a VIP. High-fidelity pre-silicon validation is therefore only possible by testing directly against those reference boards, using in-circuit emulation (ICE) to connect the pre-silicon DPU model to real silicon hardware.

At the same time, much of the value of these new architectures is software-defined. You cannot adequately validate DPU behavior without running at least subsets of the DPU application software on the pre-silicon platform. Running that software directly on an emulated or FPGA prototype model would be very slow, taking hours simply to boot the OS and launch applications. A more practical approach couples a virtual prototype, which runs the software stack on an abstracted model of the CPU-centric hardware at near-real-time speed, to an emulator or FPGA prototype running the RTL model of the rest of the hardware.
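To see why boot time dominates, a back-of-envelope calculation helps. The sketch below divides an assumed boot workload by an assumed effective execution rate for each platform. The 30-billion-cycle boot and the 2 MHz and 1 GHz rates are illustrative assumptions, not figures from any specific product.

```python
# Back-of-envelope boot-time estimate for two pre-silicon platforms.
# Every number here is an illustrative assumption, not a measurement.

BOOT_CYCLES = 30e9  # assumed CPU cycles to boot the OS and launch the application

# Assumed effective execution rates, in Hz.
PLATFORM_HZ = {
    "emulator / FPGA prototype (assumed 2 MHz)": 2e6,
    "virtual prototype (assumed 1 GHz effective)": 1e9,
}


def boot_seconds(cycles: float, hz: float) -> float:
    """Wall-clock seconds needed to execute `cycles` at an effective rate of `hz`."""
    return cycles / hz


for name, hz in PLATFORM_HZ.items():
    secs = boot_seconds(BOOT_CYCLES, hz)
    print(f"{name}: {secs:,.0f} s ({secs / 3600:.2f} h)")
```

Under these assumptions the hardware-assisted platform needs 15,000 seconds (a little over four hours) just to boot, while the virtual prototype finishes in about 30 seconds. That gap is what the hybrid arrangement is meant to close.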

Building a Digital Twin Model for a DPU

That’s the theory; what about the practice? An important consideration when mixing simulation modeling (usually hardware-assisted), system hardware, and software in a twin is managing the speed differences between these platforms. Fast though they are, hardware-assisted systems such as emulators and FPGA prototypes (I’ll use “emulator” for brevity) run at speeds in the MHz range, generally slower than the in-circuit reference boards. Speed-matching interfaces manage handshaking between the emulator and the external hardware by stalling and queuing traffic.
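The stall-and-queue idea can be illustrated with a small behavioral model. This is a minimal sketch, not any vendor’s speed bridge: the `SpeedBridge` class, its FIFO depth, and the 1-in-N rate ratio between the fast external side and the slow emulator side are all hypothetical. A bounded FIFO absorbs bursts (queuing), and when it fills, the fast side is told to wait (stalling).

```python
from collections import deque


class SpeedBridge:
    """Hypothetical speed-matching bridge between a fast external device and a
    slow emulated design. A bounded FIFO absorbs bursts; when it is full the
    bridge refuses new data, which the fast side sees as a stall."""

    def __init__(self, depth: int):
        if depth < 1:
            raise ValueError("FIFO depth must be at least 1")
        self.depth = depth
        self.fifo = deque()
        self.stalls = 0  # number of cycles the fast side was told to wait

    def push(self, item) -> bool:
        """Fast side offers one item; returns False (a stall) if the FIFO is full."""
        if len(self.fifo) >= self.depth:
            self.stalls += 1
            return False
        self.fifo.append(item)
        return True

    def pop(self):
        """Slow (emulator) side takes one item when ready, or None if the FIFO is empty."""
        return self.fifo.popleft() if self.fifo else None


def run(num_items: int, depth: int, slow_ratio: int) -> tuple[list[int], int]:
    """Fast side offers one item per tick; the slow side consumes one item every
    `slow_ratio` ticks. Returns (items received by the slow side, stall count)."""
    if slow_ratio < 1:
        raise ValueError("slow_ratio must be at least 1")
    bridge = SpeedBridge(depth)
    received = []
    next_item = 0
    tick = 0
    while len(received) < num_items:
        # Fast side: keep offering the same item until the bridge accepts it.
        if next_item < num_items and bridge.push(next_item):
            next_item += 1
        # Slow side: only active on every slow_ratio-th tick of the fast clock.
        if tick % slow_ratio == 0:
            item = bridge.pop()
            if item is not None:
                received.append(item)
        tick += 1
    return received, bridge.stalls
```

Calling `run(20, 4, 10)` delivers all 20 items in order while recording stalls on the fast side; with `slow_ratio=1` the two sides are matched and no stalls occur. A deeper FIFO reduces stalls during short bursts, but it cannot raise sustained throughput, which is set by the slower side.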

A similar capability should be available for speed matching between the virtual model running the software stack and the emulator. That said, software-only phases of operation should run as fast as possible, so that boot and other software-intensive phases of the application are not unnecessarily slowed by handshaking with emulation. A hot-swap capability between the virtual model and emulation can be very helpful here: the boot runs quickly on the virtual model, and when it finishes, the memory and configuration state are copied to the equivalent RTL logic before emulation starts. Careful choice of how the software stack and external traffic exercise the digital twin model can also limit excessive handshaking between virtual and emulation operations.
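The hot-swap sequence can be sketched as follows. The `VirtualModel`, `RtlModel`, and `ModelState` classes and the register names are hypothetical placeholders; a real flow would likely need to transfer considerably more state, through tool-specific mechanisms. The point illustrated is only the ordering: boot on the fast model, snapshot memory and configuration, load that snapshot into the RTL model, then start emulation.

```python
from dataclasses import dataclass, field


@dataclass
class ModelState:
    """Hypothetical subset of architectural state that must survive the swap."""
    memory: dict[int, int] = field(default_factory=dict)  # address -> word
    config: dict[str, int] = field(default_factory=dict)  # register name -> value


class VirtualModel:
    """Stand-in for the fast virtual prototype running the software stack."""

    def __init__(self):
        self.state = ModelState()

    def boot(self):
        # Placeholder boot: "firmware" writes memory and device configuration.
        self.state.memory.update({0x1000: 0xCAFE, 0x1004: 0xBEEF})
        self.state.config.update({"pcie_link_up": 1, "queue_count": 8})


class RtlModel:
    """Stand-in for the emulated RTL; starts from reset with empty state."""

    def __init__(self):
        self.state = ModelState()
        self.running = False

    def load_state(self, snapshot: ModelState):
        # Copy rather than alias, so later virtual-side writes cannot leak across.
        self.state = ModelState(dict(snapshot.memory), dict(snapshot.config))

    def start(self):
        self.running = True


def hot_swap(virtual: VirtualModel, rtl: RtlModel):
    virtual.boot()                 # software-only phase at virtual-model speed
    rtl.load_state(virtual.state)  # transfer memory and configuration state
    rtl.start()                    # continue the run in emulation
```

Copying the snapshot instead of sharing it keeps the two models independent after the swap, which matters if the virtual model keeps running alongside emulation.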

It’s also important to understand debug support in this mixed environment. Problems highlighted by the digital twin are likely to manifest as software misbehavior but have a root cause in hardware. Debugging across platforms demands tight coupling so that you can trace effectively between software debug and hardware debug: from local memory addresses through page tables to the hardware memory map, and ultimately to a specific hardware IP block.
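The address chain described above can be sketched as a two-step lookup: a page-table walk from a software-visible address to a physical address, followed by a search of the hardware memory map to find which IP block owns that address. The 4 KiB page size, the single-level page table, and the addresses and IP names are hypothetical; real systems typically use multi-level page tables, but the debug question is the same.

```python
PAGE_SIZE = 4096  # assumed 4 KiB pages

# Hypothetical single-level page table: virtual page number -> physical frame number.
PAGE_TABLE = {0x00400: 0x80000, 0x00401: 0x90010}

# Hypothetical hardware memory map: (base address, size, owning IP block).
MEMORY_MAP = [
    (0x8000_0000, 0x1000_0000, "DDR controller"),
    (0x9000_0000, 0x0100_0000, "NVMe queue engine"),
]


def translate(vaddr: int) -> int:
    """Software view -> physical address via the page table."""
    vpn, offset = divmod(vaddr, PAGE_SIZE)
    if vpn not in PAGE_TABLE:
        raise LookupError(f"page fault at {vaddr:#x}")
    return PAGE_TABLE[vpn] * PAGE_SIZE + offset


def owning_ip(paddr: int) -> str:
    """Physical address -> IP block via the hardware memory map."""
    for base, size, name in MEMORY_MAP:
        if base <= paddr < base + size:
            return name
    raise LookupError(f"unmapped physical address {paddr:#x}")


def trace(vaddr: int) -> tuple[int, str]:
    """Full chain a debugger would follow from a software address to hardware."""
    paddr = translate(vaddr)
    return paddr, owning_ip(paddr)
```

For example, `trace(0x400010)` maps through virtual page 0x400 to physical address 0x80000010, which falls in the DDR controller’s range, pointing hardware debug at that IP.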

Figure 2. Verifying the DPU design in-circuit

A system that meets these needs is a true twin: a hardware-assisted simulation model connected both to real system hardware and to virtual models running the OS and legacy software. It will not run quite as fast as the real thing, but with careful test planning it will run live system tests fast enough to provide a much higher level of in-system confidence than any amount of simulation-only or emulation-only pre-silicon modeling can deliver.

Broader Application

What this DPU example shares with other applications is simply that an SoC must be designed (or redesigned) and validated in the context of a larger system environment before the design is committed to implementation. The SoC could be intended for a leading-edge HPC application, or it might be targeted at an upgrade for a legacy system. Defense systems, avionics, automotive systems, and medical implants are expected to support lifetimes of 15, 20, or more years. Functional models for replacements or upgrades in these systems must be proven compatible and reliable within the real hardware system that encloses them.

For rapidly evolving systems and for upgrades to legacy systems, growing enthusiasm for digital twinning as a way to validate new or replacement designs is inevitable. Validation against simulated references alone is no longer sufficient to meet the levels of service, safety, and reliability we demand in such applications. Digital twins have become essential to meeting system-level goals.