NVIDIA DRIVE Map, Hyperion Continues Road to Autonomous Vehicles at GTC 2022

March 25, 2022


For more than a century, automobiles have been mechanical devices. In the past decade, however, they have become electronic devices. In the next ten years, they will be digital devices designed around robotics and digital twins.

At least if NVIDIA CEO Jensen Huang has his way.

At the 2022 GTC keynote, Huang revealed some major updates to the company’s autonomous vehicle technology roadmap that will accelerate this transition, specifically the DRIVE Hyperion 9 autonomous vehicle reference architecture and NVIDIA DRIVE Map.

Hyperion 9: An Open Reference Architecture for Autonomous Drive

DRIVE Hyperion 9 is an open, next-generation platform designed around the company’s Atlan SoC for intelligent driving and in-cabin functionality. It’s the latest in the DRIVE Hyperion series of automotive and autonomous vehicle development platforms and reference architectures, and it leverages an interior and exterior sensor suite from leading automotive suppliers such as Continental, Hella, Luminar, Sony, and Valeo.

Its goal is to accelerate the design of Level 4 autonomous drive features and functionality.

Previous versions of the DRIVE Hyperion reference platform, a focal point of the 2021 GTC keynote, included 12 cameras, 12 ultrasonic sensors, nine radars, and one front-facing lidar, augmented by sensor abstraction tools that allowed autonomous vehicle manufacturers to customize their designs.

Version 9 builds on that sensor suite with:

- A surround imaging radar

- Updated external cameras with higher frame rates

- Two additional side lidars

- Ultrasonic undercarriage sensors

- Three interior cameras and one radar for occupancy sensing

The DRIVE Hyperion 9 autonomous driving sensor payload now totals 14 cameras, nine radars, three lidars, and 20 ultrasonics.
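As a quick sanity check on those figures, the sketch below tallies the per-type difference between the earlier payload and the Hyperion 9 payload, using only the counts quoted in this article. The `SensorSuite` class and `delta` helper are hypothetical names introduced for illustration and are not part of any NVIDIA API. The article does not say whether the interior occupancy sensors are included in the quoted totals, so the sketch takes the totals at face value.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class SensorSuite:
    """Hypothetical container for the sensor counts quoted in the article."""
    cameras: int
    radars: int
    lidars: int
    ultrasonics: int

    def total(self) -> int:
        # Sum of all sensors across the four types.
        return self.cameras + self.radars + self.lidars + self.ultrasonics


def delta(old: SensorSuite, new: SensorSuite) -> dict:
    """Return the per-type change in sensor count from old to new."""
    old_counts, new_counts = asdict(old), asdict(new)
    return {name: new_counts[name] - old_counts[name] for name in new_counts}


# Counts as stated in the article for the previous platform and for version 9.
previous = SensorSuite(cameras=12, radars=9, lidars=1, ultrasonics=12)
hyperion_9 = SensorSuite(cameras=14, radars=9, lidars=3, ultrasonics=20)

changes = delta(previous, hyperion_9)
print(changes)
print(previous.total(), hyperion_9.total())
```

By these figures, the lidar difference of two matches the two additional side lidars listed above, and the radar count is unchanged at nine.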

The Atlan SoC “brain” of the architecture is capable of 1,000 TOPS of performance and delivers a SPECint benchmark score of greater than 100 (SPECrate2017_int). This represents a 4x performance improvement over previous generations at the same power consumption thanks to a new GPU architecture, upgraded Arm CPUs, and deep learning and vision accelerators. The new SoCs also integrate an ASIL-D-rated safety island and an NVIDIA BlueField data processing unit (DPU) for security and cryptographic workload processing in zero-trust deployments.

In all, the Atlan SoC contains ample compute horsepower to run redundant and diverse DNNs for AI-enabled automobiles that can scale into the future.

NVIDIA will begin production on the Atlan SoCs in 2023, and the DRIVE Hyperion 9 platform will be available for development in 2024 for deployment in 2026 production vehicles. The stack is software-compatible with existing Orin SoC-based Hyperion platforms, so existing code can be ported to the new reference architecture’s upgraded hardware.

DRIVE Map: From the Road to the Cloud and Back Again

Beyond local ADAS functions, one of the ways the DRIVE Hyperion platform is projected to enable Level 4+ autonomous driving is through the creation of maps, specifically with NVIDIA DRIVE Map.

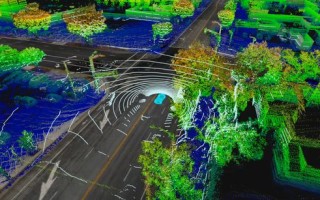

NVIDIA is positioning DRIVE Map as a foundational building block of AI-powered autonomous vehicles. It’s a multi-modal mapping engine and platform capable of updating vehicle maps with real-time, real-world road conditions, such as lane locations and obstructions, with accuracy better than 5 cm.

Of course, an AV’s navigation is only as good as its maps, and the maps are only as good as the data they’re based on. DRIVE Map uses a dual-pronged approach to collect ground truth. The first prong crowdsources sparse camera data from vehicles on the road today. The second acquires detailed 3D lidar and radar data from a mapping fleet equipped with the DRIVE Hyperion platform, which is scheduled to survey more than 300,000 miles of road in North America, Europe, and Asia by 2024.

These inputs are used as a high-definition reference (HDR) for creating 3D navigational maps, a capability based in part on the DeepMap mapping technology NVIDIA acquired last year. This process, pictured above, uses deep neural networks that assist in the automated creation of maps and in the training of perception networks, which are redeployed to vehicles from the cloud with labeled, up-to-date map data.

A New Way of DRIVE-ing

NVIDIA’s DRIVE Hyperion 9 and DRIVE Map enable uncannily human-like sensing capabilities. These solutions will unlock a vehicle’s ability to sense its surroundings, whether the data comes from its own sensors, from other cars around it, or from the cloud.

This GTC22 automotive product release is a glimpse into the freedom and safety that will soon facilitate the transition from hands-off to eyes-off to minds-off the road.