Audio Validation in Multimedia Systems and its Parameters

September 28, 2023

In the vast world of multimedia, sound is a vital component that adds depth to the overall experience. Whether it's streaming services, video games, or virtual reality, sound plays a crucial role in crafting immersive and captivating content. Nevertheless, ensuring top-notch audio quality comes with its own set of challenges. This is where audio validation enters the scene.

Audio validation involves a series of comprehensive tests and assessments to guarantee that the sound in multimedia systems matches the desired standards of accuracy and quality. Market Research Future predicts that the consumer audio market sector will expand from a valuation of USD 82.1 billion in 2023 to approximately USD 274.8 billion by 2032. This growth trajectory indicates a Compound Annual Growth Rate (CAGR) of about 16.30% within the forecast duration of 2023 to 2032.

What is Audio?

Audio is an encompassing term that refers to any sound or noise perceptible to the human ear, arising from vibrations or waves at frequencies within the range of 20 to 20,000 Hz. These frequencies form the canvas upon which the symphony of life is painted, encompassing the gentlest whispers to the most vibrant melodies, weaving a sonic tapestry that enriches our auditory experiences and connects us to the vibrancy of our surroundings.

There are two types of audio.

1. Analog audio

Analog audio refers to the representation and transmission of sound as continuous, fluctuating electrical voltage or current signals. These signals directly mirror the variations in air pressure caused by sound waves, which makes them analogous to the original acoustic waveform. When sound is recorded in an analog format, what is stored corresponds directly to what is heard and the waveform stays continuous, although its amplitude, which is measured in decibels (dB), can differ.

2. Digital audio

Digital audio is at the core of modern audio solutions. It represents sound in a digital format, allowing audio signals to be transformed into numerical data that can be stored, processed, and transmitted by computers and digital devices. Unlike analog audio, which directly records sound wave fluctuations, digital audio relies on a process known as analog-to-digital conversion (ADC) to convert the continuous analog waveform into discrete values. These values, or samples, are then stored as binary data, enabling precise reproduction and manipulation of sound. Overall, digital audio offers advantages such as ease of storage, replication, and manipulation, making it the foundation of modern communication systems and multimedia technology.

Basic Measurable Parameters of Audio

Frequency

Frequency, a fundamental concept in sound, measures the number of waves passing a fixed point in a specific unit of time. Typically measured in Hertz (Hz), it determines the pitch of a sound. Mathematically, frequency (f) is the reciprocal of the time period (T) of one wave, expressed as f = 1/T. For example, a wave with a period of 1 ms has a frequency of 1,000 Hz.

Sample rate

Sample rate refers to the number of digital samples captured per second to represent an audio waveform. Measured in Hz, it sets the highest frequency that can be represented, which is half the sample rate. For instance, a sample rate of 44.1 kHz means that 44,100 samples are taken each second, enabling the digital representation of the original sound. Common audio sample rates include 8 kHz, 16 kHz, 24 kHz, 44.1 kHz, and 48 kHz.
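To make the relationship between frequency, period, and sample rate concrete, here is a minimal sketch that generates a sampled sine tone. The function name `sine_samples` and its parameters are illustrative assumptions, not part of any particular tool.

```python
import math

def sine_samples(freq_hz, sample_rate_hz, duration_s, amplitude=1.0):
    """Return a list of sine-wave samples in the range [-amplitude, amplitude]."""
    n = int(round(sample_rate_hz * duration_s))
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
        for i in range(n)
    ]

# 10 ms of a 1 kHz tone sampled at 44.1 kHz
samples = sine_samples(1000, 44100, 0.01)
print(len(samples))          # 441 samples for 10 ms

# Period of the tone (T = 1/f) and how many samples cover one period
period_s = 1 / 1000
print(period_s * 44100)      # about 44.1 samples per period
```

A higher sample rate places more samples in each period of the tone, which is why the same waveform is described more finely at 48 kHz than at 8 kHz.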

Bit depth or word size

Bit depth, also known as word size, signifies the number of bits present in each audio sample. This parameter determines the precision with which audio is represented digitally. A higher bit depth allows for a finer representation of the sound's amplitude and nuances. Common options include 8-bit, 16-bit, 24-bit, and 32-bit depths.
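As a rough illustration of what bit depth buys, the sketch below computes the number of amplitude levels and the theoretical dynamic range for linear PCM. It assumes ideal quantization with no dither, and the function names are hypothetical.

```python
import math

def quantization_levels(bits):
    """Number of distinct amplitude values a sample of this size can hold."""
    return 2 ** bits

def dynamic_range_db(bits):
    """Theoretical ratio of full scale to one quantization step, in dB
    (roughly 6.02 dB per bit)."""
    return 20 * math.log10(2 ** bits)

for bits in (8, 16, 24):
    print(bits, quantization_levels(bits), round(dynamic_range_db(bits), 1))
```

For 16-bit audio this gives 65,536 levels and a theoretical dynamic range of about 96 dB.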

Decibels (dB)

Decibels (dB) are logarithmic units employed to measure the intensity of sound or the ratio of a sound's power to a reference level. This unit allows us to express the dynamic range of sound, spanning from the faintest whispers to the loudest roars.
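The definition above maps onto two standard formulas: 10·log10 for power ratios and 20·log10 for amplitude (voltage or sample value) ratios. The helper names below are illustrative.

```python
import math

def power_ratio_db(power, reference_power):
    """Decibel value of a power ratio."""
    return 10 * math.log10(power / reference_power)

def amplitude_ratio_db(amplitude, reference_amplitude):
    """Decibel value of an amplitude ratio (power is proportional to amplitude squared)."""
    return 20 * math.log10(amplitude / reference_amplitude)

print(round(power_ratio_db(2.0, 1.0), 2))      # doubling power: about +3.01 dB
print(round(amplitude_ratio_db(0.5, 1.0), 2))  # halving amplitude: about -6.02 dB
```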

Amplitude

Amplitude relates to the magnitude or level of a signal. In the context of audio, it directly affects the volume of sound. A higher amplitude translates to a louder sound, while a lower amplitude yields a softer tone. Amplitude shapes the auditory experience, from delicate harmonies to thunderous crescendos.

Root mean square (RMS) power

RMS power is a vital metric that measures amplitude in terms of its equivalent average power content, regardless of the shape of the waveform. It helps to quantify the energy carried by an audio signal and is particularly useful for comparing signals with varying amplitudes.
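A minimal sketch of an RMS calculation over a block of samples follows. It assumes samples are floats normalized to the range -1.0 to 1.0, and the function name `rms` is an assumption for illustration.

```python
import math

def rms(samples):
    """Root mean square: square each sample, average, then take the square root."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

# A full-scale sine has an RMS of 1/sqrt(2), about 0.707 of its peak.
sine = [math.sin(2 * math.pi * 1000 * i / 48000) for i in range(480)]
print(round(rms(sine), 4))

# A square wave at the same peak carries more power: its RMS equals its peak.
square = [1.0, -1.0] * 240
print(rms(square))
```

The two signals share the same peak amplitude but differ in RMS, which is why RMS is the more useful figure when comparing signals of different shapes.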

General Terms Used in an Audio Test

Silence

Silence denotes the complete absence of any audible sound. It is characterized by a flat line on the waveform, signifying zero amplitude. This void of sound serves as a stark contrast to the rich tapestry of auditory experiences.

Echo

An echo is an auditory effect that involves the repetitive playback of a selected audio, each iteration softer than the previous one. This phenomenon is achieved by introducing a fixed delay time between each repetition. When the delay is short enough that the repetitions run into one another without pauses, the result is a reverberation effect, commonly encountered in natural environments and digital audio manipulation.
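The echo described above can be sketched as a sum of delayed, progressively quieter copies of the input. The function `add_echo` and its parameters (`delay_samples`, `decay`, `repeats`) are hypothetical names chosen for this example.

```python
def add_echo(samples, delay_samples, decay=0.5, repeats=3):
    """Mix `repeats` delayed copies into the signal, each scaled by `decay`
    relative to the previous one."""
    out = [0.0] * (len(samples) + delay_samples * repeats)
    for k in range(repeats + 1):          # k = 0 is the original signal
        gain = decay ** k
        offset = k * delay_samples
        for i, s in enumerate(samples):
            out[offset + i] += gain * s
    return out

# A single click followed by two echoes, 2 samples apart
print(add_echo([1.0], delay_samples=2, decay=0.5, repeats=2))
# [1.0, 0.0, 0.5, 0.0, 0.25]
```

In a test, the delay and decay recovered from a recording can be compared against the values the effect was configured with.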

Clipping

Clipping is a form of distortion that emerges when audio exceeds its dynamic range, often due to excessive loudness. In a digital system, when a waveform tries to exceed full scale (0 dBFS), its peaks are flattened at this ceiling. This abrupt truncation not only results in a characteristic flat top but also alters the waveform's frequency content, introducing unintended harmonics.
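One simple way to flag clipping in a capture is to look for runs of consecutive samples sitting at the ceiling. The sketch below assumes float samples normalized so that full scale is 1.0. The thresholds `min_run` and `tolerance` are illustrative choices, not standard values.

```python
def find_clipped_runs(samples, full_scale=1.0, min_run=3, tolerance=1e-4):
    """Return (start, end) index pairs for runs of at least `min_run`
    consecutive samples whose magnitude is at full scale."""
    runs = []
    start = None
    for i, s in enumerate(samples):
        if abs(s) >= full_scale - tolerance:
            if start is None:
                start = i                      # a new flat-top run begins
        else:
            if start is not None and i - start >= min_run:
                runs.append((start, i - 1))
            start = None
    # Handle a run that extends to the end of the capture
    if start is not None and len(samples) - start >= min_run:
        runs.append((start, len(samples) - 1))
    return runs

print(find_clipped_runs([0.0, 1.0, 1.0, 1.0, 0.2]))  # [(1, 3)]
```

A single sample touching full scale is not necessarily clipping, which is why the check requires several in a row.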

DC-Offset

DC offset is a shift of a signal's baseline away from its zero point. In the waveform view, this shift is observed as the signal not being centred on the 0.0 horizontal line. This offset can lead to distortion and affect subsequent processing stages, warranting careful consideration in audio manipulation.
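Because a DC offset is a constant shift of the baseline, it can be estimated as the mean of the samples and removed by subtracting that mean. This is a minimal sketch with hypothetical function names. Real tools often use a high-pass filter instead.

```python
def dc_offset(samples):
    """Estimate the DC offset as the average sample value."""
    return sum(samples) / len(samples)

def remove_dc_offset(samples):
    """Re-centre the waveform on zero by subtracting the mean."""
    offset = dc_offset(samples)
    return [s - offset for s in samples]

shifted = [0.5, 1.5, 0.5, 1.5]
print(dc_offset(shifted))         # 1.0
print(remove_dc_offset(shifted))  # [-0.5, 0.5, -0.5, 0.5]
```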

Gain

Gain signifies the ratio of output signal power to input signal power. Expressed in decibels (dB), it quantifies how much a signal is amplified, contributing to variations in its amplitude. Positive gain amplifies the signal's intensity, while negative gain reduces it, influencing the overall loudness and dynamics.
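When gain is applied to sample values rather than power, the dB figure converts to a linear factor of 10^(dB/20), since power is proportional to amplitude squared. The sketch below uses assumed helper names.

```python
def db_to_amplitude_factor(gain_db):
    """Linear multiplier for sample values corresponding to a gain in dB."""
    return 10 ** (gain_db / 20)

def apply_gain_db(samples, gain_db):
    """Scale every sample by the given gain."""
    factor = db_to_amplitude_factor(gain_db)
    return [s * factor for s in samples]

print(db_to_amplitude_factor(20.0))            # 10.0: +20 dB is ten times the amplitude
print(round(db_to_amplitude_factor(-6.0), 3))  # about 0.501: -6 dB roughly halves it
```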

Harmonics

Harmonics are spectral components that occur at exact integer multiples of a fundamental frequency. These multiples contribute to the timbre and character of a sound, giving rise to musical richness and complexity. The interplay of harmonics forms the basis of musical instruments' distinct voices.

Frequency response

Frequency response offers a visual depiction of how accurately an audio component reproduces sound across the audible frequency range. Represented as a line graph, it showcases the device's output amplitude (in dB) against frequency (in Hz). This curve provides insights into how well the device captures the intricate nuances of sound.

Amplitude response

Amplitude response measures the gain or loss of a signal as it traverses an audio system. This measure is depicted on the frequency response curve, showcasing the signal's level in decibels (dB). The amplitude response reveals the system's ability to faithfully transmit sound without distortion or alteration.
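A frequency response curve can be approximated by feeding sine tones at several frequencies through the system and comparing output level to input level in dB. In the sketch below, a 4-tap moving average stands in for the device under test. That filter and all function names are assumptions made for illustration.

```python
import math

def rms(samples):
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def moving_average(samples, taps=4):
    """Hypothetical device under test: a simple low-pass filter."""
    out = []
    for i in range(len(samples)):
        window = samples[max(0, i - taps + 1): i + 1]
        out.append(sum(window) / taps)
    return out

def response_db(system, freq_hz, sample_rate_hz=48000, duration_s=0.1):
    """Output level relative to input level at one frequency, in dB."""
    n = int(sample_rate_hz * duration_s)
    tone = [math.sin(2 * math.pi * freq_hz * i / sample_rate_hz) for i in range(n)]
    out = system(tone)
    skip = n // 10                         # ignore the start-up transient
    ratio = rms(out[skip:]) / rms(tone[skip:])
    return 20 * math.log10(max(ratio, 1e-12))

for f in (100, 1000, 6000):
    print(f, round(response_db(moving_average, f), 2))
```

Plotting these values against frequency gives the amplitude response. For this stand-in filter the level stays near 0 dB at low frequencies and drops as frequency rises.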

Types of Testing Performed for Audio

Signal-to-noise ratio (SNR)

Testing the Signal-to-noise ratio (SNR) is a fundamental step in audio validation. It assesses the differentiation between the desired audio signal and the surrounding background noise, serving as a crucial metric in audio quality evaluation. SNR quantifies audio fidelity and clarity by calculating the ratio of signal power to noise power, typically expressed in decibels (dB).

Higher SNR values signify a cleaner and more comprehensible auditory experience, indicating that the desired signal stands out prominently from the background noise. This vital audio parameter can be tested using specialized equipment (like audio analyzers and analog-to-digital converters) and software tools, ensuring that audio systems deliver optimal clarity and quality.
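The SNR calculation itself is short once the signal and the noise floor have been captured separately, for example one recording with the test tone playing and one with the input idle. That capture procedure and the function names here are assumptions for this sketch.

```python
import math

def mean_power(samples):
    """Average power of a block of samples (mean of squared values)."""
    return sum(s * s for s in samples) / len(samples)

def snr_db(signal, noise):
    """Signal-to-noise ratio in dB from separate signal and noise captures."""
    return 10 * math.log10(mean_power(signal) / mean_power(noise))

signal = [1.0, -1.0, 1.0, -1.0]
noise = [0.1, -0.1, 0.1, -0.1]     # noise at one tenth of the signal amplitude
print(round(snr_db(signal, noise), 1))  # 20.0
```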

Latency

Latency refers to the time delay between initiating an audio input and its corresponding output. It plays a pivotal role in audio applications where synchronization and responsiveness are critical, like live performances or interactive systems. Achieving minimal latency, often measured in milliseconds, is paramount for ensuring harmony between user actions and audio responses.

Rigorous latency testing, employing methods such as hardware and software measurements, round-trip tests, real-time monitoring, and buffer size adjustments, is essential. Additionally, optimizing both software and hardware components for low-latency performance and conducting tests in real-world scenarios are crucial steps. These efforts guarantee that audio responses remain perfectly aligned with user interactions, enhancing the overall experience in various applications.
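A round-trip latency test can be sketched as follows: play a known reference signal, record what comes back, and find the lag at which the recording best matches the reference. The brute-force cross-correlation below is a minimal illustration with hypothetical names. Production tools typically use FFT-based correlation for speed.

```python
def estimate_delay_samples(reference, recorded, max_lag):
    """Return the lag (in samples) at which `recorded` best matches `reference`."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        score = sum(
            r * recorded[i + lag]
            for i, r in enumerate(reference)
            if i + lag < len(recorded)
        )
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag

def samples_to_ms(delay_samples, sample_rate_hz):
    return 1000.0 * delay_samples / sample_rate_hz

reference = [0.0, 1.0, 0.5, -0.5, 0.0, 0.0]
recorded = [0.0] * 5 + reference               # simulated 5-sample delay
delay = estimate_delay_samples(reference, recorded, max_lag=8)
print(delay)                                    # 5
print(round(samples_to_ms(delay, 48000), 3))    # 0.104
```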

Audio synchronization

Audio synchronization is a fundamental element that harmonizes the outputs of various audio channels. This test ensures that multiple audio sources, such as those in surround sound setups, are precisely aligned in terms of timing and phase. The goal is to eliminate dissonance or disjointedness among the channels, creating a unified and immersive audio experience. By validating synchronization, audio engineers ensure that listeners are enveloped in a seamless soundscape where every channel works in concert.

Apart from the above tests, we also need to validate the audio against the specific algorithm used for audio processing.

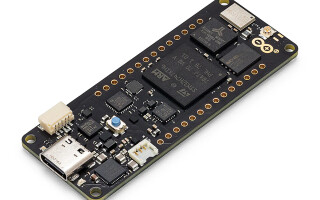

Audio test setup.

Audio Distortions

Phase Distortion

Phase distortion is a critical consideration when dealing with audio signals. It measures the phase shift between input and output signals in audio equipment. To control phase distortion, it's essential to use high-quality components in audio equipment and ensure proper signal routing.

Clipping Distortion

Clipping distortion is another type of distortion that can degrade audio quality. This distortion occurs when the signal exceeds the maximum amplitude that a system can handle. To prevent clipping distortion, it's important to implement a limiter or compressor in the audio chain. These tools can control signal peaks, preventing them from exceeding the clipping threshold. Additionally, adjusting input levels to ensure they stay within the system's operational range is crucial for managing and mitigating clipping distortion.

Harmonic Distortion

Harmonic distortion introduces unwanted harmonics into audio signals, which can negatively impact audio quality. These harmonics can be odd or even: "odd" harmonics fall at odd integer multiples of the fundamental frequency (3x, 5x, and so on), and "even" harmonics fall at even integer multiples (2x, 4x, and so on). To mitigate harmonic distortion, it's advisable to use high-quality amplifiers and speakers that produce fewer harmonic distortions.
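Harmonic distortion is commonly summarized as total harmonic distortion (THD): the combined level of the harmonics relative to the fundamental. The sketch below measures each component with a single-frequency DFT. It assumes the capture contains a whole number of periods of the fundamental, and the function names are illustrative.

```python
import math

def tone_amplitude(samples, freq_hz, sample_rate_hz):
    """Amplitude of one frequency component, found by correlating the
    capture with a cosine and a sine at that frequency."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * freq_hz * i / sample_rate_hz)
             for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
             for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n

def thd_percent(samples, fundamental_hz, sample_rate_hz, num_harmonics=5):
    """THD as the root-sum-square of harmonics 2..(num_harmonics+1)
    divided by the fundamental, in percent."""
    fund = tone_amplitude(samples, fundamental_hz, sample_rate_hz)
    harmonics = [
        tone_amplitude(samples, k * fundamental_hz, sample_rate_hz)
        for k in range(2, num_harmonics + 2)
    ]
    return 100 * math.sqrt(sum(h * h for h in harmonics)) / fund

# 1 kHz tone with a third harmonic at 1% of its amplitude
sr = 48000
captured = [
    math.sin(2 * math.pi * 1000 * i / sr) + 0.01 * math.sin(2 * math.pi * 3000 * i / sr)
    for i in range(4800)
]
print(round(thd_percent(captured, 1000, sr), 3))  # about 1.0
```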

Commonly used test files contain a sine tone, a sine sweep, pink noise, or white noise.
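As a starting point, some of these test signals can be generated with the Python standard library alone. The sketch below covers a linear sine sweep and white noise and writes 16-bit mono WAV files. Pink noise is left out because it needs an additional filtering step. All function names and the output file name are hypothetical.

```python
import math
import random
import struct
import wave

def sine_sweep(f_start_hz, f_end_hz, sample_rate_hz, duration_s):
    """Linear sweep: the instantaneous frequency rises steadily from start to end."""
    n = int(sample_rate_hz * duration_s)
    rate = (f_end_hz - f_start_hz) / duration_s      # Hz per second
    out = []
    for i in range(n):
        t = i / sample_rate_hz
        phase = 2 * math.pi * (f_start_hz * t + 0.5 * rate * t * t)
        out.append(math.sin(phase))
    return out

def white_noise(n, amplitude=0.5, seed=0):
    """Uniformly distributed noise. A fixed seed keeps test files repeatable."""
    rng = random.Random(seed)
    return [rng.uniform(-amplitude, amplitude) for _ in range(n)]

def write_wav_16bit(path, samples, sample_rate_hz):
    """Write float samples in [-1.0, 1.0] as a mono 16-bit PCM WAV file."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)                     # 2 bytes = 16 bits
        wav_file.setframerate(sample_rate_hz)
        frames = b"".join(
            struct.pack("<h", int(max(-1.0, min(1.0, s)) * 32767))
            for s in samples
        )
        wav_file.writeframes(frames)

if __name__ == "__main__":
    sweep = sine_sweep(20, 20000, 48000, 1.0)
    write_wav_16bit("sweep_20Hz_20kHz.wav", sweep, 48000)
```

The resulting files can be opened in any of the tools listed below for playback or analysis.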

There are different tools to create, modify, play, or analyze audio files. Below are a few of them.

Adobe Audition

Adobe Audition is a comprehensive toolset that includes multitrack, waveform, and spectral display for creating, mixing, editing, and restoring audio content.

Audacity

Audacity is a free and open-source digital audio editor and recording application, available for Windows, macOS, Linux, and other Unix-like operating systems.

Many devices today involve audio, such as headphones, soundbars, speakers, earbuds, and devices with audio processors that run different audio algorithms (e.g., noise cancellation, voice wake, and ANC).