Tool integration provides AI with the guardrails needed for embedded code generation

May 14, 2026


Embedded software development faces many challenges. Teams are under pressure to build increasingly sophisticated systems in less time. Many of these systems exhibit more complex and less predictable behavior because they include features that harness machine learning and artificial intelligence (AI). But unlike their counterparts in enterprise software development, embedded teams must meet stringent safety, security, and reliability requirements.

As a result, embedded teams tend to approach emerging practices such as AI-assisted development with caution. Concepts like “vibe coding,” where developers iteratively prompt AI to generate code, may be gaining attention, but they are met with understandable skepticism in safety-critical environments.

Sooner rather than later

Research has repeatedly shown that the later a defect is discovered, the more expensive it is to fix. For embedded systems, this challenge is compounded by increasing software complexity. While the ability to update devices after shipment through over-the-air (OTA) updates has become essential for addressing defects and security vulnerabilities, the primary focus for embedded teams remains on preventing these issues earlier in the lifecycle. This is driving greater emphasis on development and testing practices that can identify and resolve problems before deployment, after which the cost, risk, and impact are significantly higher.

Shift left

A major element in reconciling the seemingly disparate needs for development speed, flexibility, and safety in embedded systems is adopting a shift-left strategy based on continuous-integration practices. This strategy promotes the use of testing and verification as early as possible in the flow. Developers in this environment do not just write code; they build unit tests that check whether modules fulfill their objectives. A focus on early integration reduces the number of bugs found late in the cycle.

The greater focus on testing provides opportunities for embedded developers to exploit the productivity improvements that generative AI brings. Practices such as vibe coding, in which programmers express and tune their requirements in successive prompts, have captured much of the publicity around AI code generation. Developers in the embedded space will naturally be skeptical of approaches like this. But goal-driven AI can be a powerful tool in accelerating the generation of correct code.

One of the challenges developers face with AI coding assistants is not simply that the generated code may be incorrect. That risk is already mitigated through established practices such as unit testing and review, just as it is for code written by less experienced developers. The real issue is scale. AI can generate large volumes of code very quickly, and validating that output becomes significantly more demanding.

AI-generated code often looks correct but still requires rework. More than 70% of developers report rewriting or refactoring AI-generated code before production use. These gaps are more critical in embedded systems, where undetected defects can affect safety and reliability.

Hybrid of AI and deterministic code analysis

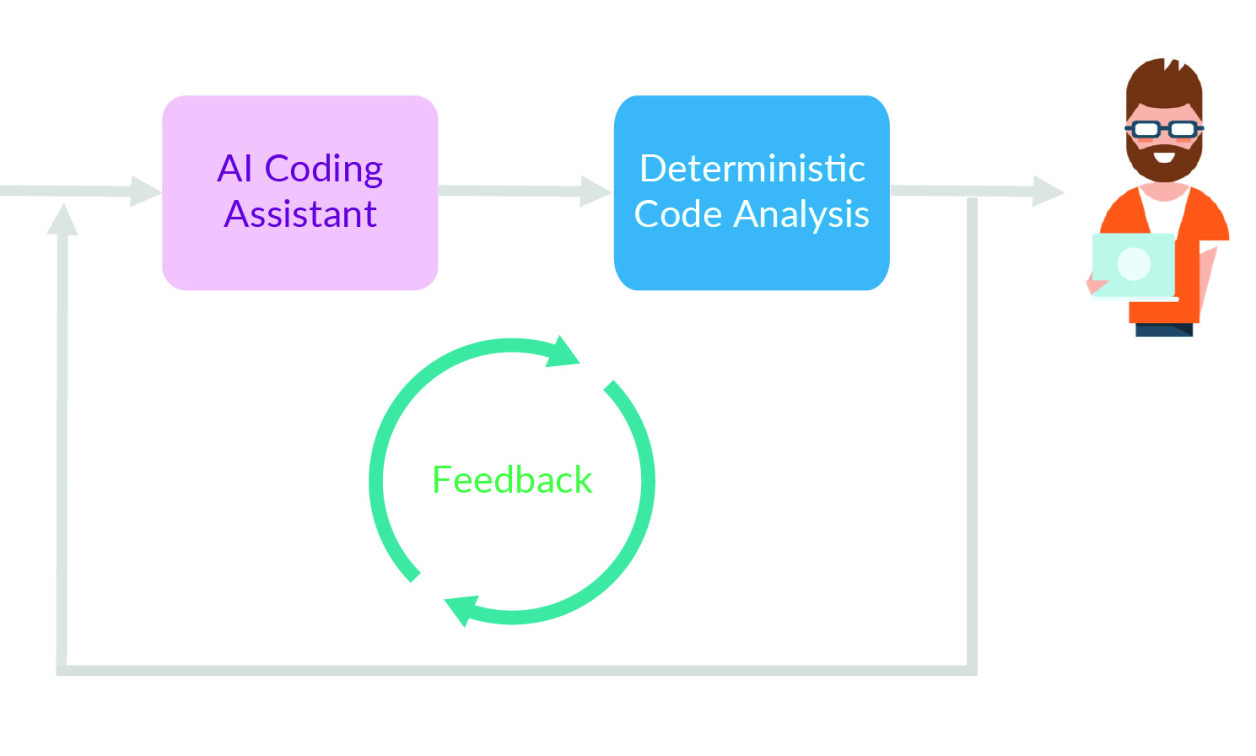

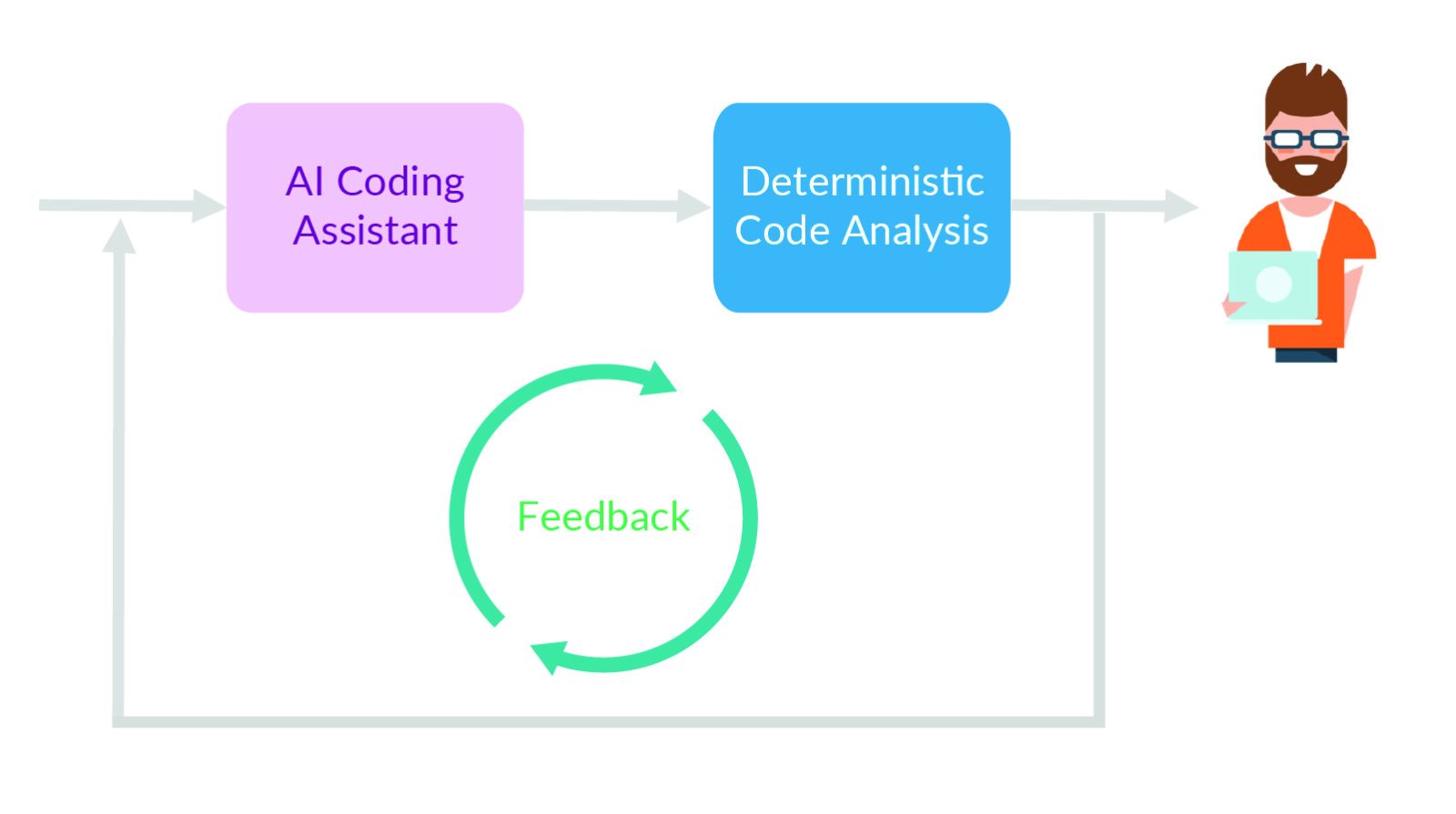

Governing AI through verification

High-volume production of automatically generated code places greater stress on developers. They face the problem of manually inspecting many lines of code that may hide problems. AI may generate hundreds or thousands of lines of code each day, and developers cannot keep pace with that rate while maintaining sufficient quality using traditional code review practices alone.

For embedded systems, the risks increase dramatically. A crash caused by faulty logic is inconvenient in a consumer app. In a system controlling motors, brakes, medical devices, or industrial equipment, it can create a serious safety hazard. A test-driven framework built for the shift-left environment can substantially reduce the risk posed by code generated from AI prompts. It also gives developers more time to check the code logic.

Crucially, development teams remain in control of which parts of the project are automated by AI. Those decisions can evolve over time as teams gain confidence in the real-world performance of the tools.

The key

The key piece of the puzzle is to embrace continuous integration practices in which developers use system specifications to create unit and integration tests in concert with the software itself. In a shift-left environment, the emphasis is on building tests that simplify integration and check functionality for correctness as more of the application is checked into the codebase. Once tests are written, the continuous integration harness can run them every time the software changes to check that the change has not introduced regressions.

When tests are built directly from requirements, the primary concern becomes whether the code behaves correctly and can be verified, regardless of whether it was written by a developer or generated by AI. However, the source of the code still matters for traceability, review, and certification. Running AI-generated code against those tests helps ensure it is fit for purpose. Human review can then place greater emphasis on architecture, maintainability, efficiency, and resource usage, while still confirming correctness and compliance. The AI can be given more refined prompts to improve correctness, performance, and overall code quality across these areas.
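As a minimal sketch of tests built directly from a requirement, the Python example below encodes one requirement as data and runs any candidate implementation against it. The requirement identifier `REQ-MOT-001`, the duty-cycle limits, and the function names are all hypothetical and chosen only for illustration; a real embedded project would more likely express the same idea in a C or C++ unit test framework.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class RequirementCase:
    req_id: str      # hypothetical requirement identifier, kept for traceability
    command: float   # input: requested duty cycle in percent
    expected: float  # output the requirement mandates


# Hypothetical requirement REQ-MOT-001: the duty-cycle command shall be
# limited to the range 0..100 percent.
CASES = [
    RequirementCase("REQ-MOT-001", -5.0, 0.0),
    RequirementCase("REQ-MOT-001", 0.0, 0.0),
    RequirementCase("REQ-MOT-001", 42.5, 42.5),
    RequirementCase("REQ-MOT-001", 100.0, 100.0),
    RequirementCase("REQ-MOT-001", 250.0, 100.0),
]


def clamp_duty_cycle(command: float) -> float:
    """Candidate implementation; it could be hand-written or AI-generated."""
    return max(0.0, min(100.0, command))


def run_cases(
    impl: Callable[[float], float], cases: List[RequirementCase]
) -> List[Tuple[str, float, float]]:
    """Return (req_id, input, actual) for every case the implementation fails."""
    failures = []
    for case in cases:
        actual = impl(case.command)
        if actual != case.expected:
            failures.append((case.req_id, case.command, actual))
    return failures
```

Calling `run_cases(clamp_duty_cycle, CASES)` returns an empty list, while a candidate that forgets the lower limit is reported against the requirement it violates. The test does not care who wrote the implementation, and the requirement identifier carried in each case supports the traceability that review and certification still need.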

Tests that complement the functional checks are equally important. It is easy for security vulnerabilities such as buffer overflows or poor memory usage practices to sneak into code. Similarly, conformance to coding standards improves overall code safety, security, and reliability. This is where the use of static analysis is crucial.

Today, static analysis can handle far more than conformance with coding standards such as MISRA, CERT, or AUTOSAR C++14. By performing control flow and data flow analysis, it can identify memory leaks, potential data corruption, unsafe memory usage, race conditions, and common security vulnerabilities such as buffer overflows and injection flaws.
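To make the idea of checking code without running it concrete, here is a deliberately small sketch that uses Python's standard `ast` module to flag direct calls to `eval` and `exec`, a common source of injection flaws. It is only a stand-in: it implements the simplest kind of rule check on Python source, not the control flow and data flow analysis that commercial tools perform on C and C++, and the rule set `BANNED_CALLS` is an assumption made for illustration.

```python
import ast

# Illustrative rule set; a real coding standard defines many more rules.
BANNED_CALLS = {"eval", "exec"}


def find_banned_calls(source: str):
    """Return sorted (line, name) pairs for each direct call to a banned function."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Only direct calls by name are detected, e.g. eval(x); aliased or
        # attribute-based calls would need deeper analysis.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in BANNED_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)


SAMPLE = "x = input()\nresult = eval(x)\nprint(result)\n"
```

Here `find_banned_calls(SAMPLE)` reports the `eval` call on line 2 without executing any of the sample code. The limitation noted in the comment is the reason production analyzers track how data flows between statements rather than matching call names.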

Next-level coding

By running static analysis and unit testing on each code update, the code generated by AI can be driven to a much higher level of quality than is possible using a coding assistant on its own. As AI becomes more ingrained in development, static analysis and test-driven validation become the guardrails that enable teams to build trust in AI-generated code.
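One way to picture these guardrails is as a gate that every code update must pass, whoever or whatever wrote it. The sketch below is an assumption about how such a gate might combine results, not a description of any particular tool: the `Violation` structure, the severity scale (1 is most severe), and the blocking threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Violation:
    rule: str      # coding-standard rule identifier (hypothetical values below)
    severity: int  # 1 = most severe; this scale is an assumption


@dataclass(frozen=True)
class GateResult:
    accepted: bool
    reasons: List[str]


def evaluate_change(
    violations: List[Violation],
    failed_tests: List[str],
    max_blocking_severity: int = 2,
) -> GateResult:
    """Accept a change only if no blocking violation and no failing test remains."""
    reasons = []
    blocking = [v for v in violations if v.severity <= max_blocking_severity]
    if blocking:
        reasons.append(f"{len(blocking)} blocking static-analysis violation(s)")
    if failed_tests:
        reasons.append(f"{len(failed_tests)} failing test(s)")
    return GateResult(accepted=not reasons, reasons=reasons)
```

A rejected result carries its reasons, which can be fed back to the coding assistant as the basis for the next, more refined prompt.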

MCP tools, enriching AI capabilities

The more code AI generates, the more valuable automated quality assurance (QA) practices such as static analysis and test-driven validation become. Over time, development teams will gain confidence in what generative AI can achieve.

This confidence can extend to helping build the tests themselves. Generative AI can take as prompt input the same specifications and requirements that developers would use to build unit and integration tests. Manual inspection will still be important for key unit tests, but code generation can speed up their initial creation. These tests can be iteratively refined by feeding the results of test execution and human review back into the AI, allowing both the tests and the underlying code to improve over time.

There will also be situations where automated test generation can improve overall quality more quickly than is possible using manual methods. Code coverage analysis is a key part of any project that involves high-criticality software. AI can analyze which parts of the application remain uncovered and generate new test cases that exercise those functions more fully. This can help teams satisfy demanding structural coverage objectives, including statement, branch, and MC/DC coverage.
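The gap analysis described above can be illustrated for MC/DC, which requires each condition in a decision to be shown to independently affect the outcome. The sketch below checks the unique-cause form of that criterion: for each condition it looks for two test vectors that differ only in that condition and produce different outcomes. The `interlock` decision and its test vectors are hypothetical, and the sketch does not measure statement or branch coverage.

```python
from itertools import combinations


def interlock(brake_released: bool, speed_ok: bool, override: bool) -> bool:
    """Hypothetical decision under test, with three conditions."""
    return (brake_released and speed_ok) or override


def conditions_missing_independence_pair(decision, tests, n_conditions):
    """Return indices of conditions with no unique-cause MC/DC pair in `tests`."""
    tests = list(tests)
    missing = []
    for i in range(n_conditions):
        covered = any(
            a[i] != b[i]  # the condition under study toggles
            and all(a[j] == b[j] for j in range(n_conditions) if j != i)
            and decision(*a) != decision(*b)  # and the outcome changes
            for a, b in combinations(tests, 2)
        )
        if not covered:
            missing.append(i)
    return missing


TESTS = [
    (True, True, False),
    (False, True, False),
    (True, False, False),
]
```

With these three vectors, `conditions_missing_independence_pair(interlock, TESTS, 3)` reports that condition 2 (`override`) has no independence pair. Adding the vector `(True, False, True)` closes the gap, which is the kind of targeted test case an AI assistant could be asked to propose for human review.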

Automation through agents

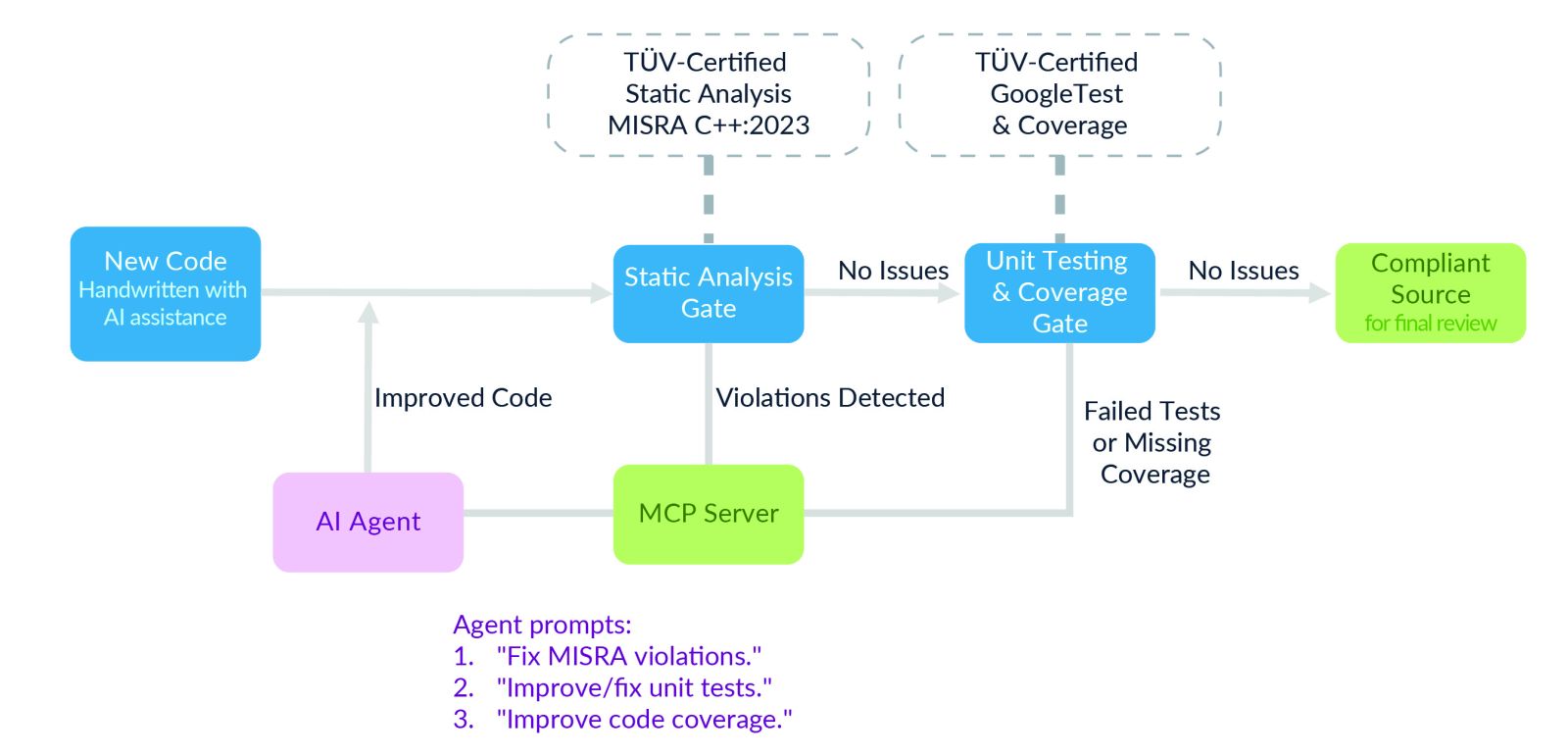

Some organizations are beginning to explore multi-agent workflows, where different AI agents specialize in tasks such as code generation, remediation of static-analysis violations, test creation, and coverage improvement. In safety-critical embedded development, however, these workflows are typically constrained and remain under human supervision.

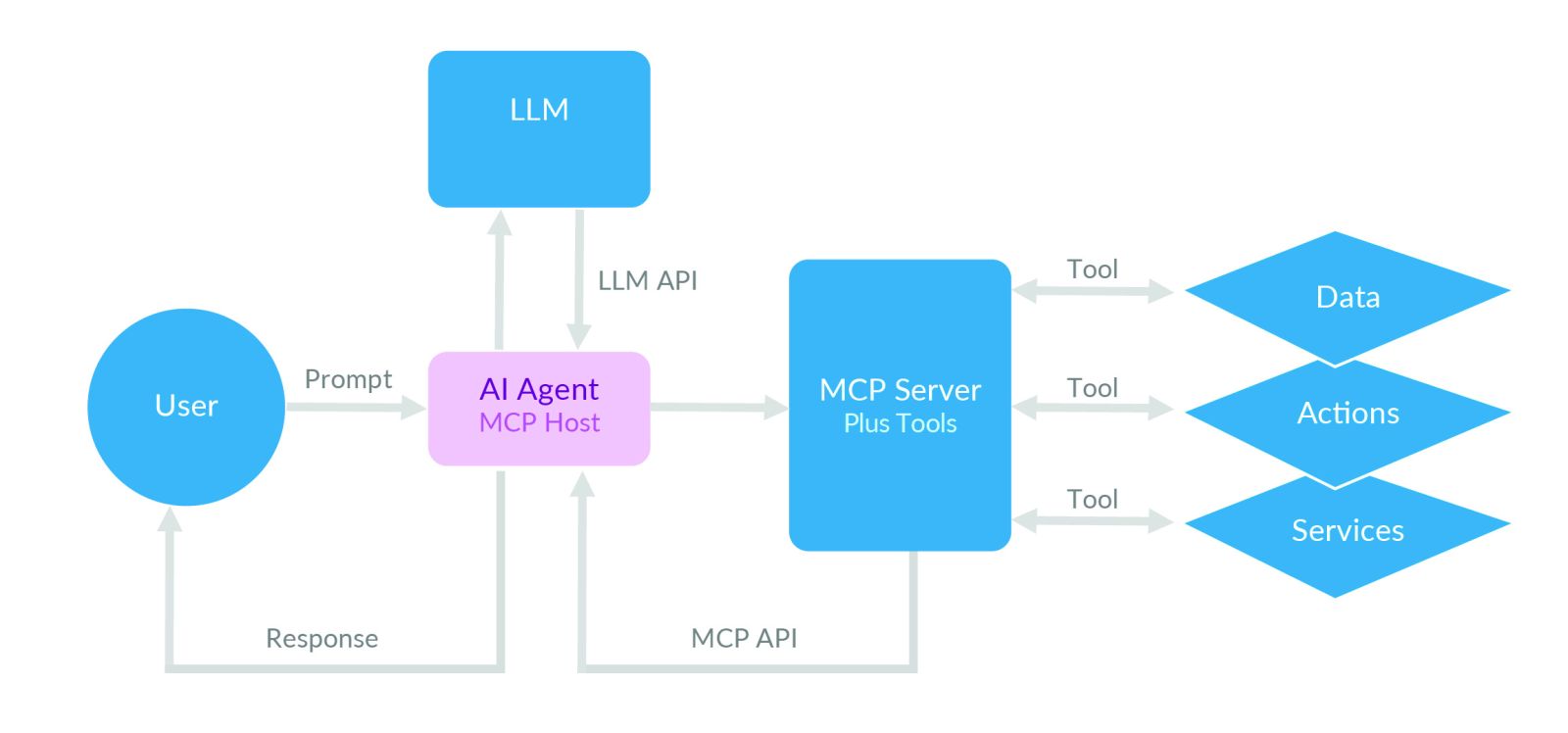

Through mechanisms such as the Model Context Protocol (MCP), software agents can invoke static analysis, unit testing, and coverage tools as part of the development process. MCP gives agents a structured way to receive contextual information and call tools, which helps keep them within defined boundaries and acting on relevant data.

For example, an MCP server can expose static analysis violations, coverage gaps, and requirements traceability information directly to an AI agent. Rather than generating arbitrary code, the agent can use that context to propose fixes, create additional tests, or improve statement, branch, and MC/DC coverage. Developers still review and approve the results before changes are committed.
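The following sketch shows the general shape of that arrangement in plain Python. It does not use an MCP SDK or implement the protocol's wire format; it only illustrates the idea of a fixed set of named tools that return structured context. The tool names, file names, rule identifiers, and coverage figures are all hypothetical.

```python
import json

# Hypothetical results that real static analysis and coverage tools would produce.
VIOLATIONS = [
    {"file": "motor.c", "line": 88, "rule": "RULE-X", "message": "possible buffer overrun"},
    {"file": "brake.c", "line": 17, "rule": "RULE-Y", "message": "uninitialized variable"},
]
COVERAGE_GAPS = [
    {"file": "motor.c", "function": "ramp_down", "branch_coverage": 0.5},
]


def list_violations(file=None):
    """Return static-analysis violations, optionally filtered by file."""
    return [v for v in VIOLATIONS if file is None or v["file"] == file]


def list_coverage_gaps(threshold=1.0):
    """Return functions whose branch coverage is below the threshold."""
    return [g for g in COVERAGE_GAPS if g["branch_coverage"] < threshold]


# The agent can only reach what is registered here; that is the boundary.
TOOLS = {
    "list_violations": list_violations,
    "list_coverage_gaps": list_coverage_gaps,
}


def call_tool(name, arguments):
    """Dispatch a tool request and return a JSON string the agent can consume."""
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    return json.dumps({"result": TOOLS[name](**arguments)})
```

A request such as `call_tool("list_violations", {"file": "motor.c"})` returns only the violations for that file, giving the agent specific findings to fix rather than an open-ended prompt. A request for a tool that is not registered is refused, and nothing in this sketch commits changes; that step stays with the developer.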

Generative AI can also assist in diagnosing problems found in the field. By analyzing logs, stack traces, and telemetry, AI can help identify likely causes, highlight gaps in test coverage, and accelerate the verification of fixes before they are delivered through an OTA update.

Over time, these techniques may lead to greater levels of automation. But in safety-critical embedded systems, the key is not fully autonomous AI. It is combining AI with static analysis, testing, coverage, and human oversight to create a faster, safer, and more controlled development process.