Accelerate AI Workloads with Rambus HBM4E Memory Controller

March 05, 2026

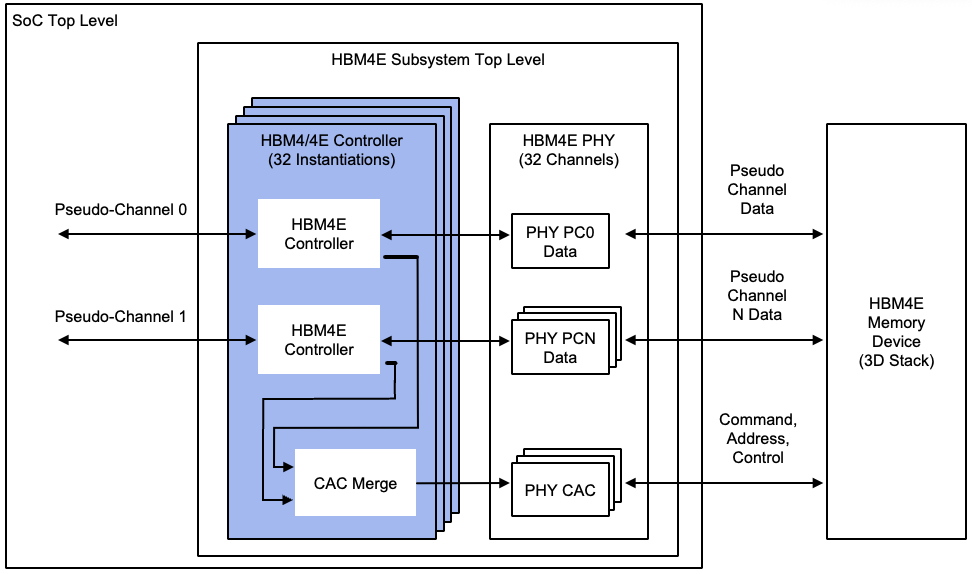

Rambus Inc. has announced its HBM4E Memory Controller IP, designed for HBM deployments in next-generation AI accelerators, graphics, and HPC applications. The controller operates at up to 16 gigabits per second (Gbps) per pin, delivering up to 4.1 terabytes per second (TB/s) of throughput to each memory device. For an AI accelerator with eight attached HBM4E devices, this translates to over 32 TB/s of memory bandwidth for demanding AI workloads.
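The bandwidth figures follow from simple arithmetic, sketched below. The announcement gives only the per-pin rate and the device count. The 2048-bit interface width per device is an assumption taken from the HBM4 generation, and the names used here are illustrative.

```python
# Assumption: each HBM4E device exposes a 2048-bit data interface, as in HBM4.
# The article states only the per-pin rate and the number of devices.
INTERFACE_WIDTH_BITS = 2048
PIN_RATE_GBPS = 16   # per-pin data rate from the announcement
DEVICES = 8          # devices attached to the example accelerator


def device_bandwidth_tbps(pin_rate_gbps, width_bits=INTERFACE_WIDTH_BITS):
    """Peak bandwidth of one device in TB/s, using decimal units."""
    gigabits_per_second = pin_rate_gbps * width_bits
    gigabytes_per_second = gigabits_per_second / 8  # 8 bits per byte
    return gigabytes_per_second / 1000              # GB/s to TB/s


per_device = device_bandwidth_tbps(PIN_RATE_GBPS)
total = per_device * DEVICES

print(f"{per_device:.3f} TB/s per device")           # 4.096 TB/s per device
print(f"{total:.3f} TB/s across {DEVICES} devices")  # 32.768 TB/s across 8 devices
```

Under that assumption, 4.096 TB/s rounds to the quoted 4.1 TB/s per device, and eight devices give 32.768 TB/s, consistent with "over 32 TB/s". These are peak interface rates, not sustained application throughput.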

The controller can be paired with third-party standard or TSV PHY solutions to form a complete HBM4E memory subsystem in a 2.5D or 3D package for AI SoC or custom die designs.

“Given the insatiable bandwidth demands of AI, it’s imperative for the memory ecosystem to continue aggressively advancing memory performance,” said Simon Blake-Wilson, SVP and general manager of Silicon IP at Rambus. “As a leading silicon IP provider for AI applications, we are bringing the industry’s leading HBM4E Controller IP solution to the market as a key enabler for breakthrough performance in next-generation AI processors and accelerators.”

Per the press release, the HBM4E Controller is available for licensing, and early-access design customers can engage today.

For more information, visit rambus.com/interface-ip/hbm/.