NVIDIA's $99 Jetson Nano Skimps on Size and Power, But Not Performance

March 19, 2019

The Jetson Nano is an 80 mm x 100 mm developer kit based on a Tegra SoC with a 128-core Maxwell GPU and quad-core Arm Cortex-A57 CPU. It's also just $99.

A few months back I attended a developer meetup at NVIDIA’s Endeavor offices where the company announced its Jetson AGX Xavier module and developer kit. By most embedded engineering standards the Tegra Xavier SoC on the module is the type of processor that beats up microcontrollers and takes their MHz.

With a price tag of $1299, the kit could also beat up some R&D budgets. But I guess that’s the going rate for 32 TOPS performance (they had to cover the cost of the built-in fan, too).

But at the GPU Technology Conference, NVIDIA lowered the bar in terms of power, area, and cost with the release of the Jetson Nano.

Jetson Nano: Priced for Makers, Performance for Professionals, Sized for Palms

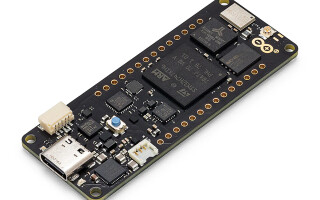

The Jetson Nano is an 80 mm x 100 mm developer kit based on a Tegra SoC with a 128-core Maxwell GPU and quad-core Arm Cortex-A57 CPU. This gives the Nano a reported 472 GFLOPS of compute horsepower, which can be harnessed within configurable power modes of 5W or 10W.
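For a rough sense of where a figure like 472 GFLOPS comes from, the arithmetic can be sketched as follows. Only the core count is taken from the text above. The GPU clock (921.6 MHz) and the idea that the headline number refers to half-precision (FP16) math at two operations per core per cycle are assumptions made for illustration, so treat this as a sanity check rather than an official derivation.

```python
# Back-of-the-envelope peak throughput for the Nano's Maxwell GPU.
CUDA_CORES = 128          # from the article
GPU_CLOCK_HZ = 921.6e6    # ASSUMPTION: maximum GPU clock, not stated in the article
FLOPS_PER_FMA = 2         # a fused multiply-add counts as two floating-point ops
FP16_LANES = 2            # ASSUMPTION: two FP16 operations per core per cycle


def peak_gflops(cores, clock_hz, lanes=1, flops_per_fma=FLOPS_PER_FMA):
    """Theoretical peak in GFLOPS: cores x clock x ops per cycle."""
    return cores * clock_hz * flops_per_fma * lanes / 1e9


fp32_gflops = peak_gflops(CUDA_CORES, GPU_CLOCK_HZ, lanes=1)
fp16_gflops = peak_gflops(CUDA_CORES, GPU_CLOCK_HZ, lanes=FP16_LANES)

print(f"FP32 peak: {fp32_gflops:.1f} GFLOPS")
print(f"FP16 peak: {fp16_gflops:.1f} GFLOPS")
print(f"FP16 per watt at 10 W: {fp16_gflops / 10:.1f} GFLOPS/W")
```

Under those assumptions the FP16 figure lands within rounding distance of the reported 472 GFLOPS. Real workloads will see less, since this is a theoretical ceiling.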

More importantly, this time around NVIDIA has an offering designed for the masses. The Jetson Nano developer kit is priced at $99. A production module (70 mm x 45 mm) scheduled for release in Q2 will cost $129 in 1,000-unit quantities.

The price/performance package of Jetson Nano represents an important step forward for pervasive deep learning at the edge. To support these workloads, the module also includes 4 GB of LPDDR4 memory clocked at 1600 MHz, whose bandwidth is particularly advantageous for applications like HD computer vision. For video use cases, the platform also supports 4Kp30 encode and 4Kp60 decode.
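To put the memory figure in perspective, here is a minimal sketch of the peak-bandwidth arithmetic for 1600 MHz LPDDR4. The 64-bit bus width is an assumption (it is not stated above), and the result is a theoretical maximum rather than a measured number.

```python
# Theoretical peak memory bandwidth for double-data-rate memory.
MEM_CLOCK_HZ = 1600e6     # from the article
TRANSFERS_PER_CLOCK = 2   # "DDR": data moves on both clock edges
BUS_WIDTH_BITS = 64       # ASSUMPTION: memory bus width, not stated in the article


def peak_bandwidth_gb_s(clock_hz, bus_width_bits, transfers_per_clock=2):
    """Peak bandwidth in GB/s: transfers per second x bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return clock_hz * transfers_per_clock * bytes_per_transfer / 1e9


bandwidth = peak_bandwidth_gb_s(MEM_CLOCK_HZ, BUS_WIDTH_BITS, TRANSFERS_PER_CLOCK)
print(f"Peak memory bandwidth: {bandwidth:.1f} GB/s")
```

With these inputs the estimate comes out to roughly 25.6 GB/s, which is shared between the CPU and GPU on a Tegra-style SoC.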

The Nano’s inferencing performance benchmarks for some popular CV neural networks are provided below.

The I/O also plays a big role in making the Nano accessible to the developer ecosystem. The Nano features a 260-pin SODIMM connector and 40-pin expansion header that make it compatible with a host of peripherals, including many common to the Raspberry Pi and Adafruit communities.

As such, the interfaces on the developer kit are largely what you would expect:

- 4x USB 3.0 (Host), USB 2.0 (Device)

- MIPI CSI-2 x2 (Flex Connector)

- HDMI

- DisplayPort

- Gigabit Ethernet (RJ-45)

- M.2 Key-E with PCIe x1

- MicroSD Card Slot

- Serial (I2C, I2S, SPI, UART, GPIOs)
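As an illustration of what the 40-pin header makes possible, the sketch below toggles a single GPIO pin. It assumes NVIDIA's `Jetson.GPIO` Python package, whose interface mirrors `RPi.GPIO`, and an LED wired to physical pin 12. The pin choice, the `blink` helper, and its parameters are hypothetical. The GPIO module is passed in as an argument so the logic can be tried off-device with a stand-in object.

```python
import time


def blink(gpio, pin=12, cycles=3, period_s=0.5, sleep=time.sleep):
    """Toggle `pin` high and low `cycles` times, then release it.

    `gpio` is expected to look like the Jetson.GPIO module
    (setmode, setup, output, cleanup). Pin 12 is a hypothetical wiring choice.
    """
    gpio.setmode(gpio.BOARD)                      # use physical header pin numbers
    gpio.setup(pin, gpio.OUT, initial=gpio.LOW)   # start with the pin low
    try:
        for _ in range(cycles):
            gpio.output(pin, gpio.HIGH)
            sleep(period_s / 2)
            gpio.output(pin, gpio.LOW)
            sleep(period_s / 2)
    finally:
        gpio.cleanup()                            # always release the pin


# On the developer kit itself (assuming Jetson.GPIO is installed):
#   import Jetson.GPIO as GPIO
#   blink(GPIO, pin=12)
```

Because the calling convention follows `RPi.GPIO`, many Raspberry Pi snippets should port with little more than a changed import, though pin capabilities and voltage levels are worth checking against the board documentation first.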

One Software Stack to Rule Them All

Like its predecessors, the Jetson Nano supports NVIDIA’s JetPack SDK. Version 4.2 of the package includes an Ubuntu-based desktop Linux environment, CUDA 10 support, and cuDNN and TensorRT libraries. Of course, frameworks like TensorFlow, PyTorch, Caffe, MXNet, OpenCV, and the Robot Operating System (ROS) are also supported.
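Which of these frameworks are actually present depends on what has been installed on a given board. A quick way to check is sketched below: it probes for each framework's usual Python import name without importing it. The `available_frameworks` helper is hypothetical, and the name mapping is an assumption about typical installs rather than anything JetPack guarantees.

```python
from importlib.util import find_spec

# Display name -> usual top-level Python import name (ASSUMPTION: typical installs).
FRAMEWORK_MODULES = {
    "TensorFlow": "tensorflow",
    "PyTorch": "torch",
    "Caffe": "caffe",
    "MXNet": "mxnet",
    "OpenCV": "cv2",
    "TensorRT": "tensorrt",
    "ROS (rospy)": "rospy",
}


def is_importable(module_name):
    """Return True if the module can be located, without importing it."""
    try:
        return find_spec(module_name) is not None
    except (ImportError, ValueError):
        # find_spec can raise for unusual or partially initialized modules.
        return False


def available_frameworks(modules=FRAMEWORK_MODULES):
    """Map each display name to whether its module is importable."""
    return {name: is_importable(mod) for name, mod in modules.items()}


for name, present in available_frameworks().items():
    print(f"{name:12s} {'found' if present else 'missing'}")
```

A "missing" result only means the Python package was not found in the current environment. It says nothing about whether the underlying native library is installed.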

In a separate but related announcement at GTC, NVIDIA unveiled its CUDA-X AI stack, a suite of software acceleration libraries built on top of the company’s CUDA parallel programming model. The stack incorporates:

- cuDNN for deep learning primitives

- cuML for machine learning algorithms

- TensorRT for optimizing trained models for inference

- 15 additional libraries for data science, graph analytics, etc.

The stack’s components are free for individual download or can be containerized as services in the NVIDIA GPU Cloud (NGC).

Jetson Nano falls under the AGX box in the bottom right of the diagram. What the diagram tells us is that libraries developed for certain use cases, such as high-performance computing, machine learning, or ray tracing, are optimized for use across NVIDIA’s GPU hardware. It’s a mix-and-match concept that provides flexibility for developers, while also ensuring consistency from the data center-class processors that train AI models to the edge platforms like Jetson Nano that will perform inferencing.

This will be an important, and probably overlooked, nuance as NVIDIA attempts to dominate the edge-to-cloud AI infrastructure much as Intel did in enterprise servers and workstations.

Start MAIking

Now that NVIDIA tech is trending towards use in the broader developer community, makers need infrastructure. Here are some resources to get you off the ground:

- Get the kit: https://developer.nvidia.com/buy-jetson

- Download the JetPack SDK: https://developer.nvidia.com/embedded/jetpack

- Projects: https://developer.nvidia.com/embedded/community/jetson-projects

- Jetson Nano Developer Forum: https://devtalk.nvidia.com/default/board/371/jetson-nano/

A good project to help you get started with the Nano is the JetBot, an intelligent mini robot equipped with an 8 MP camera that is capable of object detection, avoidance, and basic navigation. Code for the JetBot is open-sourced on GitHub.

Time to start maiking.